Munro Richardson devoured the reading research. He immersed himself in how literacy is taught in classrooms. He pored over databases of curricula and programs, like the What Works Clearinghouse and the Florida Center for Reading Research.

He became obsessed, the Read Charlotte executive director said, with finding an answer for turning around persistently low reading proficiency scores among elementary students nationwide.

“What works?” he asked. “We’ve tried a lot of things, but we haven’t seen the needle move.”

The question is top of mind in North Carolina, which is just beginning implementation of a new reading law that mandates training and teaching be grounded in the science of reading, a vast body of recent and decades-old scientific research on how the brain learns to read.

While Richardson hopes the new approaches will translate to better results, he’s seen well-intentioned policy fall short before. He’s studied those failures, hoping to learn from past missteps. His investigation unearthed more questions, and a possible solution.

This past year, Read Charlotte has promoted a literacy platform called A2i and is leading efforts to pilot a related mobile app as a home-based solution for unfinished learning and summer slide in Mecklenburg County.

“I have no doubt you can change teacher practices, because we’ve done it before,” Richardson said. “But changing teacher practices that lead to changes in student outcomes is hard. But that’s what this can do.”

Finding Carol Connor

Richardson has been studying and tracking the research that drives A2i for four years, ever since Harvard professor and literacy expert James Kim mentioned the name Carol Connor to him.

Connor, who passed away in 2020, spent more than a decade looking at why reading research wasn’t translating to instructional practice. She published a series of journal articles on her study methods and findings.

Richardson read with amazement, mostly at the fact that he’d never seen the research before.

“And I started to realize, ‘Whoa, there’s more out there than what I’m seeing here’ — which, on the one hand, was frightening,” Richardson said. “But, on the other hand, it gave me hope, because I looked at a lot of stuff, and I’d seen very few things that we thought, ‘OK, this is a home run.’”

What struck Richardson most about Connor’s research was the potential to bridge the gap between research on how kids learn to read and practical approaches to how teachers should teach. For example, the simple view of reading says that reading comprehension is the product of both decoding and language comprehension.

“OK, great, we know how kids learn to read, but we’ve got to teach them to read,” Richardson said. “And so what folks have been trying to do is really stretch the simple view beyond which the research underneath it was able to tell you, ‘OK, this is what you should do.’”

This, Richardson said, has sometimes led to ineffective instructional practices that aren’t supported by research. Connor’s research, he said, goes beyond what the simple view says and delves into what that means for the classroom.

Connor and her team conducted a series of observational studies — totaling 2,000 hours over five years — looking at what teachers and kids did in reading classrooms.

They used beginning-of-year assessments to measure each student’s strengths and weaknesses in different areas of reading development, tracked how many minutes of instruction each student received in different reading blocks, and cross-referenced the information with end-of-year assessments to see how the minutes and types of instruction impacted growth or decline over the year.

She co-authored her first paper on this topic in 2004, “Beyond the Reading Wars: Exploring the Effect of Child–Instruction Interactions on Growth in Early Reading.”

She broke down how many minutes of instruction each student received in different critical components of reading instruction and then ran regression analyses to find out what best predicted grade-level reading proficiency. She also sorted instruction into two types: code-based (like decoding) and meaning-based (like language comprehension).

“There is a focus on both decoding and meaning-focused,” said Ann Schulte, a professor emeritus in North Carolina State University’s psychology department who worked with Connor. “Some kids don’t need the help in decoding. Well, they take the assessment and now you know that, so you don’t drill them to death with the phonics. They can move ahead. But you scaffold for the kids who need it.”

Why Connor’s research matters in N.C. classrooms

Schulte believes the ability to predict how much code-based versus meaning-based instruction a child needs is critical for the ultimate goal of reading comprehension. Otherwise, too much emphasis may be placed on decoding.

“Phonics and teaching decoding is important, but it’s low-hanging fruit,” she said. “We’re still not quite there, but you can teach that. And you can teach that in discrete pieces. But once you can decode, you have to have the background knowledge and you have to have the vocabulary to understand what you read.”

Schulte said that typical texts K-3 students work with during decoding instruction don’t challenge comprehension skills like texts students encounter in, say, fourth and fifth grade. So younger students may flourish with decoding while background knowledge or vocabulary issues remain hidden. But A2i’s assessment would identify these gaps so they can be addressed.

In addition to dividing instruction into code-based and meaning-based types, Connor’s research breaks each type into two more dimensions: adult-managed and child-managed, which are essentially teacher-led and student-led instruction, respectively.

“First of all, she found children typically need increasing amounts of child-managed instruction as they go through the school year,” Richardson said. “So what materials we put in front of them becomes really important. If they’re not getting enough child-managed, they’re not going to be able to reach their full potential.

“Second, she found that teacher-managed instruction in small group was four times more effective than whole group instruction. So you put those things together, and you start to get a more nuanced perspective.”

Connor published an article about these four dimensions in 2014, titled “Individualizing Teaching in Beginning Reading.”

With funding from the U.S. Department of Education, Connor’s team used the observational research to create an algorithm that determines how many minutes in each of the four different dimensions of instruction a child needs to develop toward proficiency. She tested it through seven randomized controlled trials.

Connor co-founded a company, Learning Ovations, to commercialize the algorithm. With an expansion grant through the Department of Education, Learning Ovations is now replicating the seven trials by tracking data in 17 school districts around the country.

How it looks in the classroom

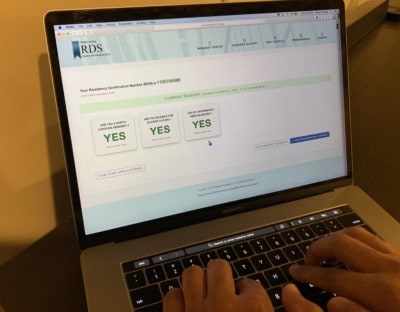

The algorithm now exists within the A2i platform. As often as every six weeks, a student takes a 15-minute assessment, and A2i uses the data collected to tell the teacher how many minutes that student needs in each of the four dimensions.

This allows teachers to take their training in reading instruction and marry it with practical guidance offered almost in real time.

“You can’t mandate what matters, I guess is the saying in education,” Schulte said. “You’ve got to have the understanding and you’ve got to have the tools to know how to change.”

Connor also indexed the platform against several different curricula and programs. So along with the percentage of time a student should spend in each dimension, the teacher also gets a list of activities compatible with the curricula and programs used in the classroom.

“Why does that matter?” Richardson said. “Because we know that teachers spend about 10 to 12 hours a week looking for stuff on Pinterest, Google, Teachers Pay Teachers. And so you can remove that burden from teachers that have to do that.”

There’s another reason it matters, he says. Diagnostic assessments like Istation and mCLASS are just that — diagnostic. They tell teachers where students are stronger and weaker. They aren’t prescriptive, though, so teachers need to figure out what to do with the data.

“So you think about when you go to the doctor,” Richardson said. “They’re looking at a computer screen. You tell them your symptoms and the computer is helping to prescribe an evidence-informed course of treatment for you. Well, doctors aren’t the only ones that use decision-support systems. Farmers use it to help them with crops. Engineers use it. But with teachers, we’re expecting wizardry.”

Helping teachers, not replacing them

Richardson said the platform can do this without micromanaging teachers. It’s the difference between a driverless car, which would remove the teacher from the situation entirely, and a GPS, he says.

“It is helping the teacher actually be a better teacher by saying, here are the blind spots,” Richardson said. “It’s helping them to stay on the road. So I think of it [like] a GPS for teachers. It’s helping them to navigate each of their children to third grade reading proficiency.”

“Teachers need help translating the results,” Schulte said, “but they also need to have their knowledge respected. And that’s what I like about A2i, is it does both.”

Jo Welter is the former superintendent of Ambridge Area School District in Beaver County, Pennsylvania, an urban district home to about 2,600 kids. It was one of the seven districts in the randomized controlled trial of the A2i algorithm.

Before using A2i, Welter said, instruction in the district was mostly based on a whole language approach. After one year of the trial, she said, the district saw more than one academic year’s worth of growth in vocabulary for K-1 students and more than one academic year’s worth of growth in reading for first graders.

The reports from her teachers were also positive.

“I would say it took at least five or six weeks, but then they were elated,” Welter said. “It was working, and they were excited because they have the kids so engaged.”

The downside? It’s a vendor. It costs money.

“But just to put this in context, if all 110 (Charlotte-Mecklenburg) schools were to use this, that would be $2.2 million a year,” Richardson said, adding that these are estimates. “So, three years to get to the third-grade outcomes, if you spend $6.6 million, we can transform literacy outcomes.”
